TechDays Online 2017 Bot Framework / Cognitive Services now available

This February saw the return of TechDays Online here in the UK, along with other sessions from across the pond in the U.S. I co-presented two sessions on Bot Framework development with Simon Michael from Microsoft and fellow MVP James Mann. The sessions offered practical advice about bot development and dug a little deeper into subjects including FormFlow and the QnA Maker and LUIS cognitive services.

Both sessions are now available to watch online, along with tons of other great content from the rest of the 3 days.

Conversational UI using the Microsoft Bot Framework

Microsoft Bot Framework and Cognitive Services: Make your bot smarter!

Another fellow MVP, Robin Osborne, also recorded some short videos about his experience of building a real-world bot for a leading brand, JustEat, so check them out over on his blog too.

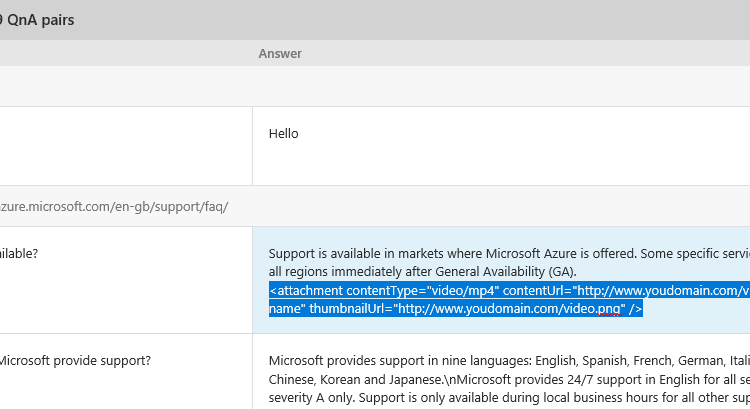

Adding rich attachments to your QnAMaker bot responses

Recently I released a dialog, available via NuGet, called the QnAMaker dialog. This dialog allows you to integrate with the QnA Maker service from Microsoft, part of the Cognitive Services suite, which allows you to quickly build, train and publish a question and answer bot service based on FAQ URLs or structured lists of questions and answers.

Today I am releasing an update to this dialog which allows you to add rich attachments to your QnAMaker responses to be served up by your bot. For example, you might want to provide the user with a useful video to go along with an FAQ answer.

QnA Maker Dialog for Bot Framework

Update: The QnA Maker Dialog v3 is now available. It adds support for v3 of the Microsoft QnA Maker API, including returning multiple answers and use of metadata to filter / boost answers that are returned. You can read more about this and a new QnA Maker Sync library that is now also available on the announcement blog here. Also, I have previously released an update to the QnAMakerDialog which supports adding rich media attachments to your Q&A responses.

The QnA Maker service from Microsoft, part of the Cognitive Services suite, allows you to quickly build, train and publish a question and answer bot service based on FAQ URLs or structured lists of questions and answers. Once published you can call a QnA Maker service using simple HTTP calls and integrate it with applications, including bots built on the Bot Framework.
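To give a feel for what such an HTTP call might look like, here is a minimal C# sketch that builds the request. It rests on several assumptions: the endpoint URL is passed in because its exact shape depends on the API version and region, the `Ocp-Apim-Subscription-Key` header and the `question` body property reflect the preview-era generateAnswer operation, and `QnAMakerRequestSketch` and `BuildRequest` are hypothetical names used only for illustration.

```csharp
using System;
using System.Net.Http;
using System.Text;

public static class QnAMakerRequestSketch
{
    // Builds a POST request asking a published knowledge base for an answer.
    // Header name and body shape are assumptions; check your service's publish page.
    public static HttpRequestMessage BuildRequest(Uri generateAnswerEndpoint, string subscriptionKey, string question)
    {
        // Minimal JSON escaping for the sketch; a JSON serializer is the safer choice.
        var escaped = question.Replace("\\", "\\\\").Replace("\"", "\\\"");

        var request = new HttpRequestMessage(HttpMethod.Post, generateAnswerEndpoint)
        {
            Content = new StringContent($"{{\"question\":\"{escaped}\"}}", Encoding.UTF8, "application/json")
        };
        request.Headers.Add("Ocp-Apim-Subscription-Key", subscriptionKey);
        return request;
    }
}
```

The request could then be sent with `HttpClient.SendAsync` and the JSON response read from the returned content. Rolling this yourself is exactly the plumbing that a ready-made dialog is meant to save you from.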

Right now, out of the box, you will need to roll your own code / dialog within your bot to call the QnA Maker service. The new QnAMakerDialog which is now available via NuGet aims to make this integration even easier, by allowing you to integrate with the service in just a couple of minutes with virtually no code.

The QnAMakerDialog takes the incoming message text from the bot, sends it to your published QnA Maker service and passes the answer returned by the service back to the bot user as a reply. You can add the new QnAMakerDialog to your project using the NuGet package manager console with the following command, or by searching for it using the NuGet Manager in Visual Studio.

Below is an example of a class inheriting from QnAMakerDialog and the minimal implementation.

    [Serializable]
    [QnAMakerService("YOUR_SUBSCRIPTION_KEY", "YOUR_KNOWLEDGE_BASE_ID")]
    public class QnADialog : QnAMakerDialog<object>
    {
    }

When no matching answer is returned from the QnA service, a default message, “Sorry, I cannot find an answer to your question.”, is sent to the user. You can override the NoMatchHandler method to send a customised response.

For many people the default implementation will be enough, but you can also provide more granular responses for when the QnA Maker returns an answer but is not confident in it. Confidence is indicated by the score returned in the response, which runs from 0 to 100, with a higher score indicating higher confidence. To do this you define a custom handler in your dialog and decorate it with a QnAMakerResponseHandler attribute, specifying the maximum score that the handler should respond to.

Below is an example with a customised method for when a match is not found and also a handler for when the QnA Maker service indicates a lower confidence in the match (using the score sent back in the QnA Maker service response). In this case the custom handler will respond to answers where the confidence score is below 50, with any above 50 being handled in the default way. You can add as many custom handlers as you want and get as granular as you need.

    [Serializable]
    [QnAMakerService("YOUR_SUBSCRIPTION_KEY", "YOUR_KNOWLEDGE_BASE_ID")]
    public class QnADialog : QnAMakerDialog<object>
    {
        // Called when the QnA Maker service returns no matching answer.
        public override async Task NoMatchHandler(IDialogContext context, string originalQueryText)
        {
            await context.PostAsync($"Sorry, I couldn't find an answer for '{originalQueryText}'.");
            context.Wait(MessageReceived);
        }

        // Called for lower-confidence answers (maximum score of 50).
        [QnAMakerResponseHandler(50)]
        public async Task LowScoreHandler(IDialogContext context, string originalQueryText, QnAMakerResult result)
        {
            await context.PostAsync($"I found an answer that might help...{result.Answer}.");
            context.Wait(MessageReceived);
        }
    }
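To make the idea of several score-based handlers more concrete, the sketch below shows one way a handler could be picked for a given score: choose the handler with the lowest maximum score that still covers the score, and fall back to the default otherwise. This is an illustration only and not the QnAMakerDialog's actual implementation; `ScoreHandlerSketch`, `SelectHandler` and the handler names are hypothetical, and treating a score exactly equal to the maximum as a match is an assumption.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class ScoreHandlerSketch
{
    // Returns the name of the handler with the lowest maximum score that still
    // covers the given score, or "default" when no custom handler applies.
    // Assumption: a score equal to the maximum counts as covered.
    public static string SelectHandler(IDictionary<string, double> maxScoreByHandler, double score)
    {
        var match = maxScoreByHandler
            .Where(handler => score <= handler.Value)
            .OrderBy(handler => handler.Value)
            .Select(handler => handler.Key)
            .FirstOrDefault();

        return match ?? "default";
    }
}
```

With two hypothetical handlers registered at maximum scores of 20 and 50, a score of 10 would go to the first, a score of 35 to the second, and a score of 80 would be handled in the default way.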

Hopefully you will find the new QnAMakerDialog useful when building your bots and I would love to hear your feedback. The dialog is open source and available in my GitHub repo, alongside the other dialog I have created for the Bot Framework, BestMatchDialog (also available on NuGet).

I will be publishing a walkthrough of creating a service with the QnA Maker in a separate post in the near future, but if you are having trouble with that, or indeed with the QnAMakerDialog, in the meantime then please feel free to reach out.

Building conversational forms with FormFlow and Microsoft Bot Framework – Part 2 – Customising your form

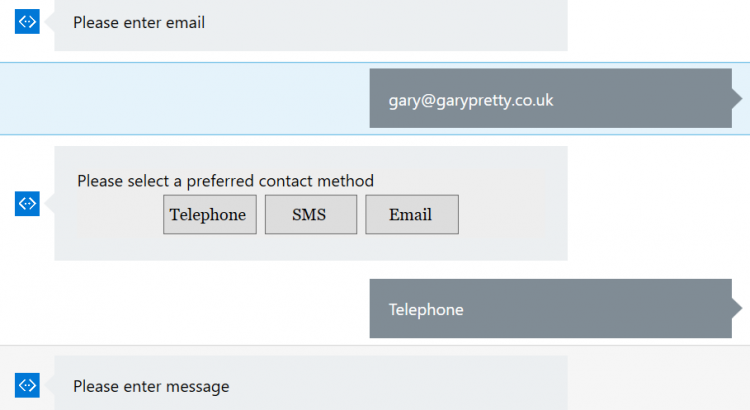

In my last post (Building conversational forms with FormFlow and Microsoft Bot Framework – Part 1) I gave an introduction to FormFlow, the part of the Bot Framework that creates conversational forms automatically from a model and lets you take information from a user, with many of the complexities, such as validation, moving between fields and confirmation steps, handled for you. If you have not read that post, I encourage you to give it a quick read now, as this post follows on directly from it.

As promised, in this post we will dig further into FormFlow and look at how you can customise the form process: changing prompt text, changing the order in which fields are requested from the user, and using concepts like conditional fields.
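As a taste of those three customisations, here is a minimal sketch assuming the Bot Builder v3 SDK for .NET (the `Microsoft.Bot.Builder` NuGet package). The `CustomisedContactForm` class and its fields are hypothetical; the `Prompt` attribute and the `Field` method with its `active` delegate come from the `Microsoft.Bot.Builder.FormFlow` namespace.

```csharp
using System;
using Microsoft.Bot.Builder.FormFlow;

[Serializable]
public class CustomisedContactForm
{
    // Custom prompt text replaces the prompt FormFlow would generate.
    [Prompt("What is your name?")]
    public string Name;

    // {||} asks FormFlow to list the available choices (yes/no for a bool).
    [Prompt("Would you like us to call you back? {||}")]
    public bool WantsCallback;

    [Prompt("What number can we reach you on?")]
    public string Telephone;

    public string Message;

    public static IForm<CustomisedContactForm> BuildForm()
    {
        return new FormBuilder<CustomisedContactForm>()
            // Fields are requested in the order they are added here,
            // not in the order they are declared on the class.
            .Field(nameof(Message))
            .Field(nameof(Name))
            .Field(nameof(WantsCallback))
            // Conditional field: only asked when a callback was requested.
            .Field(nameof(Telephone), active: state => state.WantsCallback)
            .Build();
    }
}
```

Declaring the fields explicitly on the builder is what gives control over ordering; if no fields are specified, FormFlow falls back to the order of the public fields and properties on the class.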

Building conversational forms with FormFlow and Microsoft Bot Framework – Part 1

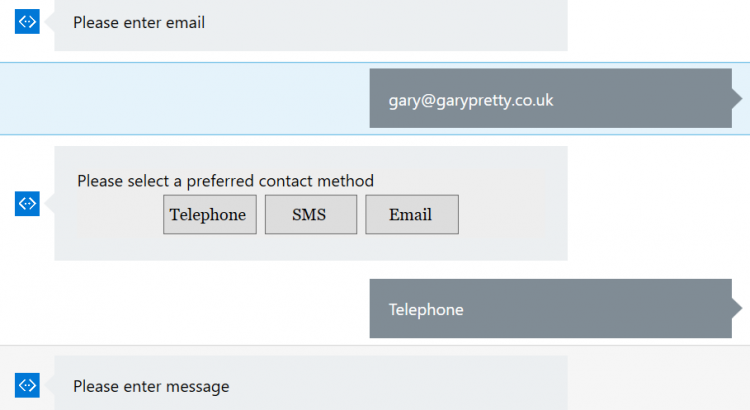

Forms are common. Forms are everywhere. Forms on web sites and forms in apps. Forms can be complicated – even the simple ones. For example, when a user completes a contact form they might provide their name, address, contact details, such as email and telephone, and their actual contact message. We have multiple ways that we might take that information, such as drop down lists or simply free text boxes. Then there is the small matter of handling validation as well: required fields, fields where the value needs to come from a pre-defined set of choices, and even conditional fields, where whether they are required is determined by the user’s previous answers.

So, what about when we need to get this type of information from a user within the context of a bot? We could build the whole conversational flow ourselves using traditional Bot Framework dialogs, but handling a conversation like this can be really complex. For example, what if the user wants to go back and change a value they previously entered? The good news is that the Bot Framework has a fantastic way of handling this sort of guided conversation – FormFlow. With FormFlow we can define our form fields and have the user complete them, whilst getting help along the way.

In this post I will walk through what is needed to get a basic form using FormFlow working.
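For orientation, a basic form might look something like the sketch below. It assumes the Bot Builder v3 SDK for .NET (the `Microsoft.Bot.Builder` NuGet package); the `ContactForm` class, its fields and the `ContactReason` enum are hypothetical examples based on the contact form described above.

```csharp
using System;
using Microsoft.Bot.Builder.Dialogs;
using Microsoft.Bot.Builder.FormFlow;

// Enum values become a list of choices; starting at 1 leaves 0 free to mean "not set".
public enum ContactReason { Sales = 1, Support, Feedback }

[Serializable]
public class ContactForm
{
    // Each public field becomes a question that FormFlow asks the user.
    public string Name;
    public string Email;
    public ContactReason? Reason;
    public string Message;

    public static IForm<ContactForm> BuildForm()
    {
        return new FormBuilder<ContactForm>()
            .Message("Thanks for getting in touch. I just need a few details.")
            .Build();
    }

    // Wraps the form in a dialog that can be used as the bot's root dialog.
    public static IDialog<ContactForm> MakeRootDialog()
    {
        return Chain.From(() => FormDialog.FromForm(BuildForm));
    }
}
```

In a v3 bot this dialog would typically be started from the messages controller, for example with `Conversation.SendAsync(activity, ContactForm.MakeRootDialog)`.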

Making Amazon Alexa smarter with Microsoft Cognitive Services

Recently those of us who work at Mando were lucky enough to receive an Amazon Echo Dot to play with, to see if we could innovate with them in any interesting ways. As I have been doing a lot of work recently with the Microsoft Bot Framework and Microsoft Cognitive Services, this was something I was keen to do. The Echo Dot, hardware that sits on top of the Alexa service, is a very nice piece of kit for sure, but I quickly found some limitations once I started extending it with some skills of my own. In this post I will talk about my experience so far and how you might be able to use Microsoft services to make up for some of the current Alexa shortcomings.

Using speech recognition and synthesis in Windows 10 to talk to your bot (and have it talk back!)

In my previous posts I have shown how we can use the Microsoft Bot Framework to create smart, intelligent bots that users can interact with using natural language, which is interpreted using the LUIS service (part of Microsoft Cognitive Services). The bots that we can create using these tools are really powerful but, in most cases, users are still typing text when communicating with the bot. This is perfectly OK in most situations, but for some requirements it would be far more fitting to let the user simply talk to the bot and have the bot respond in the same way. There are examples of where this can be done on the existing bot channels already, such as the Slack mobile app with a voice recognizer function so you don't have to type your text, but what if we wanted to bake this into our own app? In this post I am going to provide some basic code that allows a user to converse with a bot using speech in a Windows 10 UWP app.
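As a rough idea of the building blocks involved, the sketch below uses the UWP `SpeechRecognizer` and `SpeechSynthesizer` classes to listen for one utterance and to speak a reply. It is a sketch under assumptions rather than the code from the post: `SpeechSketch`, `ListenAsync` and `SpeakAsync` are hypothetical names, the app needs the microphone capability declared in its manifest, and sending the recognised text to the bot (for example over Direct Line) is left out.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Media.SpeechRecognition;
using Windows.Media.SpeechSynthesis;
using Windows.UI.Xaml.Controls;

public static class SpeechSketch
{
    // Listens for a single utterance and returns the recognised text,
    // or null if recognition did not succeed.
    public static async Task<string> ListenAsync()
    {
        using (var recognizer = new SpeechRecognizer())
        {
            // With no constraints added, the default dictation grammar is used.
            await recognizer.CompileConstraintsAsync();
            SpeechRecognitionResult result = await recognizer.RecognizeAsync();
            return result.Status == SpeechRecognitionResultStatus.Success ? result.Text : null;
        }
    }

    // Converts the bot's reply to audio and plays it through a MediaElement on the page.
    public static async Task SpeakAsync(MediaElement player, string text)
    {
        using (var synthesizer = new SpeechSynthesizer())
        {
            SpeechSynthesisStream stream = await synthesizer.SynthesizeTextToStreamAsync(text);
            player.SetSource(stream, stream.ContentType);
            player.Play();
        }
    }
}
```

A conversation loop would call `ListenAsync`, pass the text to the bot, and hand each reply to `SpeakAsync`.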

Give your bot some ‘manners’ with the BestMatchDialog

This is a follow up to my earlier post, which introduced the new BestMatch Dialog, now available via NuGet. One of the best uses for the BestMatch Dialog, and the reason I created it in the first place, is adding ‘manners’ to a bot, i.e. being able to respond to those common things that people say that fall outside of the usual conversation you would handle with your bot. This post will therefore show how to use a BestMatch Dialog as a child dialog to respond to general messages like “hello” and “thanks”. It is amazing what a huge difference handling these sorts of messages can make and how much more natural talking to your bot will feel for your end users.