Some things are just hard. As a developer, tasks such as recognising a face within a photo or understanding speech are, in general, things that you cannot do on your own. These sorts of capabilities, though, could significantly transform the experiences you offer in your apps. Imagine being able to write a kiosk app that instantly knew who the user was just by seeing their face, or being able to have a user ask a question and understand what they were asking without writing reams and reams of limiting regular expressions. Some time ago a project got underway at Microsoft to solve this problem. Codenamed Project Oxford, its idea was simple: bring the power of machine learning, which can only be harnessed by an entity of a similar scale to Microsoft, to developers to use anywhere, anytime.

What is Project Oxford?

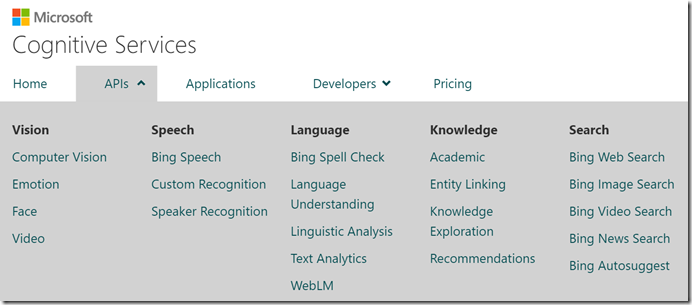

Project Oxford, initially announced at the Build 2015 conference, offered a number of preview services and APIs to developers to handle things like speech processing, facial recognition and language understanding. Since then Project Oxford has come a long way and has now been renamed Microsoft Cognitive Services. With an expanded collection of APIs now available (still in preview at the time of writing), the experiences that you can add to your apps are really compelling.

There are too many services to go into in a single post, but here are a few to whet your appetite:

Language Understanding Intelligence Service

LUIS, as it is commonly known, is a service that allows you to accept natural language input from a user and have their intent interpreted for you. For example, a user might say “turn on the lights” or “switch on the lights in this room”. Even with this simple example there are numerous ways that a user might phrase the request, and if you had to interpret it yourself you would be limited to looking for static words in the content such as “lights” or “on”. LUIS steps in by allowing you to create your own model for your application that defines your list of intents (e.g. Turn Lights On or Turn Lights Off) and then provide it with a list of example utterances – the phrases a user might use to trigger that action. Once LUIS has a few example utterances for each intent you have defined, you can have it automatically train your model. Once training has completed, the service should be able to take a phrase like “switch on the light”, which it has never heard before, and correctly predict what the user is trying to do. Better still, as more requests are submitted to the service, it can continue to re-train your model for you, helping it to improve results, and you can manually check some of the predictions and correct them if necessary.
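To make the contrast concrete, here is a minimal Python sketch comparing static keyword matching with picking the top-scoring intent from a prediction response. The response shape, the field names (`query`, `intents`, `intent`, `score`), the intent names and the scores are all assumptions made up for illustration; check the LUIS documentation for the real format.

```python
# Hypothetical prediction response. Field names, intent names and scores
# are illustrative assumptions, not output captured from the real service.
sample_response = {
    "query": "switch on the light",
    "intents": [
        {"intent": "TurnLightsOn", "score": 0.92},
        {"intent": "TurnLightsOff", "score": 0.05},
        {"intent": "None", "score": 0.03},
    ],
}


def keyword_match(utterance):
    """Naive approach: look for static words in the utterance."""
    words = utterance.lower().split()
    if "lights" in words and "on" in words:
        return "TurnLightsOn"
    if "lights" in words and "off" in words:
        return "TurnLightsOff"
    return None


def top_intent(response, min_score=0.5):
    """Return the highest-scoring intent name, or None if it scores below min_score."""
    intents = response.get("intents", [])
    if not intents:
        return None
    best = max(intents, key=lambda item: item["score"])
    return best["intent"] if best["score"] >= min_score else None


print(keyword_match("turn on the lights"))   # TurnLightsOn
print(keyword_match("switch on the light"))  # None ("light" is not "lights")
print(top_intent(sample_response))           # TurnLightsOn
```

The `min_score` threshold is a design choice rather than anything the service requires: it lets your app fall back to asking the user to rephrase when the model is not confident.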

The LUIS service is already being used a great deal with other platforms, such as the Microsoft Bot Framework, and I will be exploring this service further in a future post very soon, where I will show you how to make a bot more intelligent using LUIS (if you haven’t built your first bot yet then check out my two-part tutorial on creating one with C# and the Bot Framework).

Emotion API

The Emotion API can take images containing faces as input and tell you what sort of emotion it thinks each facial expression is conveying. Right now the service can try to detect the following emotions:

- anger

- contempt

- disgust

- fear

- happiness

- sadness

- surprise

- neutral

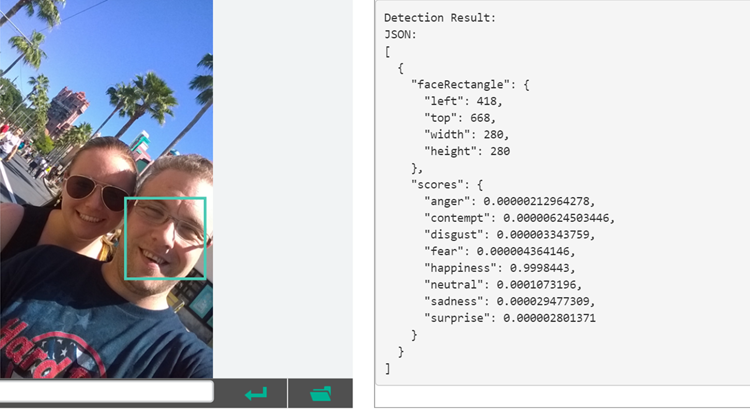

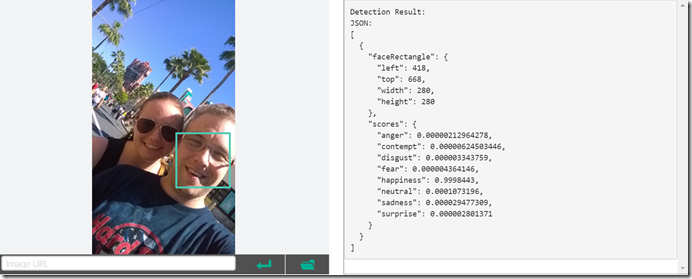

When an image is supplied, the service returns a response that contains a list of the faces it has detected, including their bounding coordinates within the image, along with a probability for each emotion indicating how likely it is that the face is conveying it. Take the example pictured here (from the Emotion API web page, which lets you upload your own photo to test the service – very cool!), where I am in my favourite holiday destination, Walt Disney World. In this photo the service has detected only one of the two faces in the image – it isn’t perfect – but it has correctly identified that I am happy, with a 99.98% probability.
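As a rough illustration of working with a response like this, the Python sketch below picks the most likely emotion for each detected face. The field names (`faceRectangle`, `scores`) and all of the numbers are assumptions chosen to mirror the description above, not output captured from the service.

```python
# Illustrative response: one detected face with a bounding box and a
# probability per emotion. Field names and values are assumptions.
sample_response = [
    {
        "faceRectangle": {"left": 120, "top": 80, "width": 96, "height": 96},
        "scores": {
            "anger": 0.00001,
            "contempt": 0.00002,
            "disgust": 0.00001,
            "fear": 0.00001,
            "happiness": 0.9998,
            "neutral": 0.0001,
            "sadness": 0.00003,
            "surprise": 0.00002,
        },
    }
]


def dominant_emotions(faces):
    """Return (bounding box, emotion, probability) for each detected face."""
    results = []
    for face in faces:
        # Pick the emotion with the highest probability for this face.
        emotion, probability = max(face["scores"].items(), key=lambda kv: kv[1])
        results.append((face["faceRectangle"], emotion, probability))
    return results


for box, emotion, probability in dominant_emotions(sample_response):
    print(f"Face at ({box['left']}, {box['top']}): {emotion} ({probability:.2%})")
```

With the sample data above this prints one line reporting happiness at 99.98% for the single face.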

This service has huge potential, especially in the area of sentiment analysis. For some time now we have had services that recognise sentiment in text, in a Twitter feed for example, and the Emotion API opens the door to doing the same with images.

Speaker Recognition

The Speaker Recognition API offers a couple of interesting angles on recognising who is speaking. Firstly, you can use the API to identify who is speaking on a given audio recording, from a list of known people / voices. In the example on the Cognitive Services site, the voices of a number of US Presidents have been enrolled (enrolment is the process of initially giving the service an example of how someone speaks, along with their identity). You are then able to submit one of a number of audio samples from each of the Presidents, or upload your own, and have the service tell you which one it is.

The API can also go a step beyond simply identifying a known person from a recording: it can verify that a voice matches a specific identity. The Speaker Verification part of the API allows you to specify who you are looking to match, along with the voice recording, and have the API indicate whether this is a match. This could be really useful in a two-factor authentication scenario.
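The difference between identification (which of many enrolled people is this?) and verification (is this the person they claim to be?) can be shown with a toy Python sketch. This is not how the service works internally and it does not call the API: the three-number "voiceprints", the names, the cosine-similarity comparison and the threshold are all invented purely to illustrate the two kinds of question.

```python
import math

# Toy "enrolment": each known speaker gets a made-up voiceprint vector.
enrolled = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.2, 0.8, 0.5],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def identify(sample, profiles):
    """Identification: pick the closest enrolled speaker (one-to-many)."""
    return max(profiles, key=lambda name: cosine(sample, profiles[name]))


def verify(sample, claimed, profiles, threshold=0.95):
    """Verification: does the sample match one claimed identity (one-to-one)?"""
    return cosine(sample, profiles[claimed]) >= threshold


recording = [0.85, 0.15, 0.35]  # made-up voiceprint of a new recording
print(identify(recording, enrolled))         # alice
print(verify(recording, "alice", enrolled))  # True
print(verify(recording, "bob", enrolled))    # False
```

Identification always returns the best candidate from the enrolled list, whereas verification gives a yes/no answer for a single claimed identity, which is why the latter suits an authentication step.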

When you think about it, this API is pretty exciting because it unlocks many possible scenarios. For example, we might have a kiosk app placed in a factory that staff can use to get information relevant to their job role, such as a list of current tasks. Previously they might have needed to log in to the kiosk to ensure they got information that was relevant to them, but now they can be recognised automatically using the API in the background.

Pricing and general availability

At the moment, most of the Cognitive Services are still in preview (with the exception of the Bing APIs, including the Auto Suggest and Spell Check APIs, which were brought out of preview in the last few days https://azure.microsoft.com/en-us/updates/general-availability-microsoft-cognitive-services-bing-apis/), but they look to be close to general availability as there are now preview pricing tiers that allow you to use the services immediately. The great news is that not only do the services generally have a free tier (up to 10,000 transactions per month in the case of the LUIS service, for example), but they also seem to be very reasonably priced at scale. For every 1000 transactions in preview for the LUIS service (outside of the 10,000 free) you pay just $0.75! In terms of pricing when these services are generally available, Microsoft have indicated that the preview prices are approximately a 50% discount on the likely final prices. Still, that’s only around $1.50 for interpreting 1000 user phrases – bargain!
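To put those numbers in context, here is a small Python sketch that estimates a monthly LUIS bill from the figures quoted above. It assumes usage beyond the free tier is billed pro rata per transaction (the actual billing granularity is an assumption), and the general availability price is only the estimate implied by the 50% preview discount.

```python
FREE_TRANSACTIONS = 10_000           # free tier per month (from the figures above)
PREVIEW_PRICE_PER_1000 = 0.75        # USD, preview pricing
ESTIMATED_GA_PRICE_PER_1000 = 1.50   # USD, estimate if preview is a 50% discount


def monthly_cost(transactions, price_per_1000, free=FREE_TRANSACTIONS):
    """Estimated monthly cost in USD, assuming pro-rata billing beyond the free tier."""
    billable = max(0, transactions - free)
    return billable / 1000 * price_per_1000


print(monthly_cost(10_000, PREVIEW_PRICE_PER_1000))       # 0.0
print(monthly_cost(50_000, PREVIEW_PRICE_PER_1000))       # 30.0
print(monthly_cost(50_000, ESTIMATED_GA_PRICE_PER_1000))  # 60.0
```

So an app handling 50,000 phrases a month would pay for 40,000 of them: $30 at preview prices, or roughly $60 at the estimated final price.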

Summary / where next?

The truth is, you can barely scratch the surface of what Cognitive Services have to offer in a single blog post, but you should at least now have a general idea of what they are and the sort of tasks you can achieve with them. Why not head on over to the official web site and explore some of the other services on offer as part of the suite? In future posts on this blog I am going to dive into some of the services and show you how to implement them in your apps, including a post very soon about using the LUIS service as part of a Microsoft Bot Framework bot to really have a natural conversation with your users. Subscribe to the RSS feed or follow me on Twitter @garypretty for updates when new posts are published.

What do you think of Microsoft Cognitive Services? Do you think you will use any of them in your solutions? I would love to hear your feedback so hit the comments if you have something to say.