Category: Cognitive Services

Making Amazon Alexa smarter with Microsoft Cognitive Services

Recently those of us who work at Mando were lucky enough to receive an Amazon Echo Dot to play with, to see whether we could innovate with it in any interesting ways. As I have been doing a lot of work recently with the Microsoft Bot Framework and Microsoft Cognitive Services, this was something I was keen to do. The Echo Dot, hardware that sits on top of the Alexa service, is a very nice piece of kit, but I quickly found some limitations once I started extending it with skills of my own. In this post I will talk about my experience so far and how you might be able to use Microsoft services to make up for some of the current Alexa shortcomings.

Integrating a LUIS natural language model with your bot using LUISDialog

The LUIS service, part of the Cognitive Services suite, helps you with the task of natural language processing. In my last post I created a natural language model using Microsoft’s LUIS service, and in this post I am going to show you how to hook that model up to a bot built with the Bot Framework, using a special type of dialog class, the LUIS Dialog. If you haven’t got a LUIS model already, go back and work through the last post; it really doesn’t take long.

Update: I have now published a quick video overview of LUIS including how to create your first model.

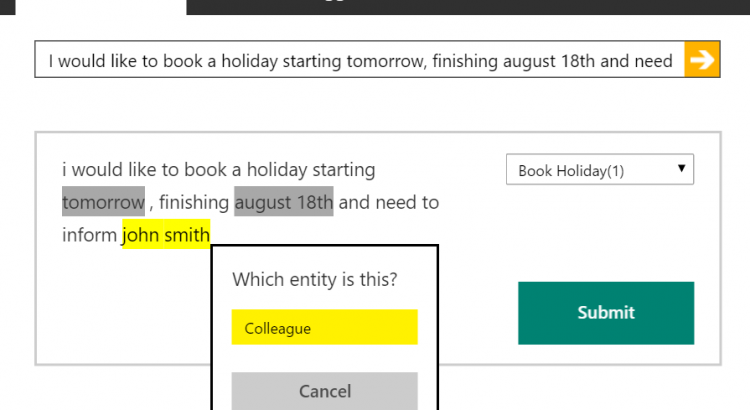

Using the Microsoft LUIS service to build a model to understand natural language input

LUIS, the Language Understanding Intelligent Service, is part of Microsoft’s Cognitive Services suite. If you are reading this and you don’t yet have a clear understanding of what Cognitive Services are, head over and read my introduction to Cognitive Services before you continue.

During this post I will introduce you to what the LUIS service is and show you how to build a model to aid in natural language understanding in your applications.

Update: I have now published a quick video overview of LUIS including how to create your first model.

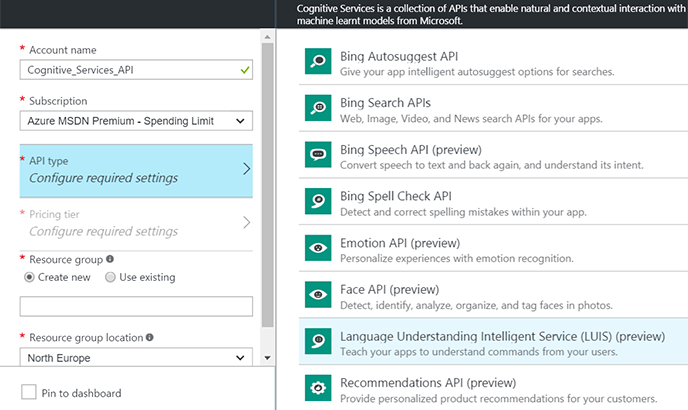

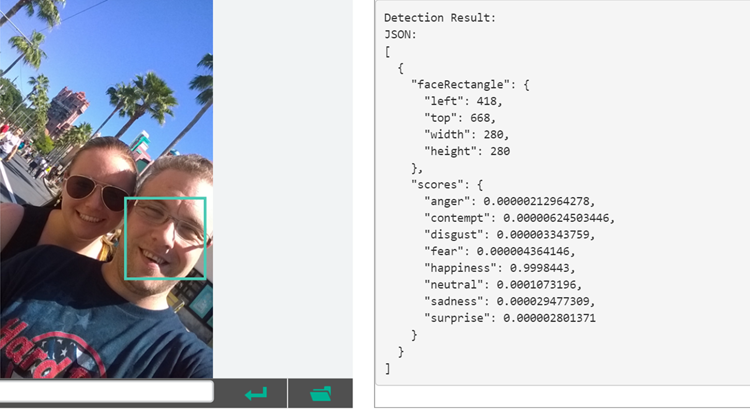

What are Microsoft’s Cognitive Services?

Some things are just hard. For a developer, tasks such as recognising a face within a photo or understanding speech are, in general, things you cannot do on your own. These sorts of capabilities, though, could significantly transform the experiences you offer in your apps. Imagine being able to write a kiosk app that instantly knew who the user was just by seeing their face, or being able to have a user ask a question and understand what they were asking without writing reams and reams of limiting regular expressions. Some time ago a project got underway at Microsoft to solve this problem. Codenamed Project Oxford, its idea was simple: bring the power of machine learning, which can only be harnessed by an entity of a similar scale to Microsoft, to developers to use anywhere, anytime.